Feature Stores, Vector DBs & the New Data Stack for AI

Gartner's 2024 AI survey: only about 40% of AI prototypes reach production, and data availability is the top barrier. The fix isn't a smarter model; it's better data infrastructure underneath it.

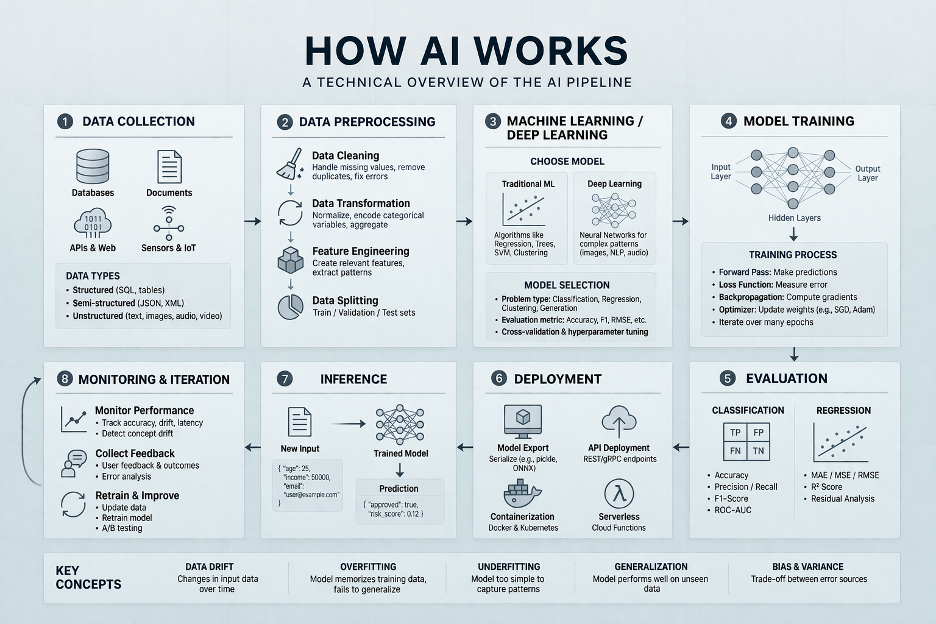

Machine learning systems are only as good as the data pipelines feeding them. Yet for years, ML teams stitched together ad hoc solutions that worked for experiments but collapsed under production load. According to the 2024 Gartner AI Mandates for the Enterprise Survey, approximately 40% of AI prototypes make it into production, with data availability and quality cited as the top barrier. The modern AI data stack exists to close that gap.

Feature Stores: Solving Training-Serving Skew

Feature stores like Tecton, Feast, and Hopsworks solve one of ML's most persistent problems: training-serving skew. When a team computes features one way during training (batch SQL) and another way during serving (real-time Python), models behave unpredictably in production. Feature stores address this with a single, versioned feature definition that serves both contexts. They also enable feature reuse across teams, which prevents duplicate engineering effort and yields a governed, searchable catalog of production-ready features with lineage and ownership.
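Each feature store has its own definition API; the sketch below uses none of them. It is plain Python illustrating the core idea under simple assumptions: one feature function, called by both the offline training path and the online serving path, with a point-in-time cutoff so training rows never see future events. `Purchase`, `user_spend_features`, `build_training_rows`, and `serve_features` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Purchase:
    """Hypothetical raw event: one purchase by a user."""
    user_id: str
    amount: float
    ts: datetime


def user_spend_features(purchases: List[Purchase], as_of: datetime) -> Dict[str, float]:
    """The single feature definition shared by training and serving.

    Only events strictly before `as_of` are used, so training rows get
    point-in-time-correct values (no leakage from the future).
    """
    past = [p.amount for p in purchases if p.ts < as_of]
    total = sum(past)
    count = len(past)
    return {
        "purchase_count": float(count),
        "total_spend": total,
        "avg_spend": total / count if count else 0.0,
    }


def build_training_rows(purchases: List[Purchase],
                        labels: List[Tuple[str, datetime, int]]) -> List[Dict[str, float]]:
    """Offline path: one feature row per (user_id, label_time, label)."""
    rows = []
    for user_id, label_time, label in labels:
        history = [p for p in purchases if p.user_id == user_id]
        feats = user_spend_features(history, as_of=label_time)
        rows.append({**feats, "label": label})
    return rows


def serve_features(purchases: List[Purchase], user_id: str, now: datetime) -> Dict[str, float]:
    """Online path: the same function, evaluated at request time."""
    history = [p for p in purchases if p.user_id == user_id]
    return user_spend_features(history, as_of=now)
```

Because both paths call `user_spend_features`, any change to the feature logic reaches training and serving together. A feature store enforces that property at scale and additionally materializes the computed values into offline and online stores, which this in-memory sketch does not attempt.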

Vector Databases: The RAG Era

Retrieval-Augmented Generation (RAG) has made vector databases (Pinecone, Weaviate, Qdrant, and the pgvector extension for PostgreSQL) mainstream. These systems store high-dimensional embeddings and support approximate nearest-neighbor search at millisecond latency, allowing LLMs to retrieve relevant enterprise context at query time without retraining. The data management challenges are significant: keeping embeddings synchronized with source documents, managing embedding model versions, and ensuring retrieved context is current.
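A minimal sketch of retrieval plus the synchronization problem, assuming an in-memory store and a toy bag-of-words `toy_embed` standing in for a real embedding model. It uses exact brute-force cosine similarity rather than an approximate nearest-neighbor index (HNSW is a common choice in vector databases). Each stored vector records a hash of its source text and the embedding model version, so stale entries can be detected and re-embedded. `TinyVectorIndex` and all other names are hypothetical.

```python
import hashlib
import math
import re
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Vector = List[float]

# Hypothetical stand-in for a real embedding model: word counts over a tiny vocabulary.
VOCAB = ["refund", "policy", "shipping", "days", "password", "reset"]


def toy_embed(text: str) -> Vector:
    words = re.findall(r"[a-z]+", text.lower())
    return [float(words.count(w)) for w in VOCAB]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class Entry:
    vector: Vector
    source_hash: str    # hash of the document text that was embedded
    model_version: str  # which embedding model produced the vector


class TinyVectorIndex:
    """Exact cosine search over an in-memory dict (not ANN)."""

    def __init__(self, embed: Callable[[str], Vector], model_version: str):
        self.embed = embed
        self.model_version = model_version
        self.entries: Dict[str, Entry] = {}

    def upsert(self, doc_id: str, text: str) -> bool:
        """Re-embed only if the text or the model changed; True if (re)embedded."""
        h = content_hash(text)
        old = self.entries.get(doc_id)
        if old and old.source_hash == h and old.model_version == self.model_version:
            return False
        self.entries[doc_id] = Entry(self.embed(text), h, self.model_version)
        return True

    def stale_ids(self, current_texts: Dict[str, str]) -> List[str]:
        """Docs whose vector no longer matches the source text or the current model."""
        out = []
        for doc_id, e in self.entries.items():
            text = current_texts.get(doc_id)
            if (text is None or e.source_hash != content_hash(text)
                    or e.model_version != self.model_version):
                out.append(doc_id)
        return sorted(out)

    def search(self, query: str, k: int = 3) -> List[Tuple[str, float]]:
        """Top-k by cosine similarity, skipping vectors from other model versions."""
        q = self.embed(query)
        scored = [(doc_id, cosine(q, e.vector)) for doc_id, e in self.entries.items()
                  if e.model_version == self.model_version]
        scored.sort(key=lambda t: t[1], reverse=True)
        return scored[:k]
```

Skipping vectors from other model versions in `search` matters because embeddings from different models live in incompatible spaces; mixing them silently returns meaningless neighbors.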

Data Products: Gartner's 2025 Hype Cycle Priority

Gartner's 2025 Hype Cycle for Data Management identified data products as "critical for data and analytics success," defining them as integrated, prepared data assets that are "findable, trusted, self-contained and certified for reuse." The data product model formalizes the interface between data engineering and ML consumers, creating explicit contracts about schema, freshness, completeness, and statistical properties. When a contract is violated, the ML pipeline fails fast rather than silently degrading model performance.
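A minimal sketch of a fail-fast contract check covering the four properties named above (schema, freshness, completeness, statistical properties). It assumes rows arrive as a list of dicts and that the producer records an `updated_at` timestamp. `DataContract`, `validate`, and `ContractViolation` are hypothetical names, not the API of any particular data-contract tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List, Optional, Tuple


class ContractViolation(Exception):
    """Raised so the ML pipeline stops instead of training on bad data."""


@dataclass(frozen=True)
class DataContract:
    # Hypothetical contract fields; real contract specs vary by tool.
    schema: Dict[str, type]                         # column -> expected Python type
    max_staleness: timedelta                        # freshness limit for the dataset
    max_null_fraction: float                        # completeness, per column
    mean_bounds: Dict[str, Tuple[float, float]]     # allowed (low, high) column means


def validate(rows: List[Dict[str, Any]], contract: DataContract,
             updated_at: datetime, now: Optional[datetime] = None) -> None:
    now = now or datetime.now(timezone.utc)
    if not rows:
        raise ContractViolation("dataset is empty")

    # Schema: every expected column present with the expected type (None is checked below).
    for i, row in enumerate(rows):
        for col, typ in contract.schema.items():
            if col not in row:
                raise ContractViolation(f"row {i}: missing column {col!r}")
            if row[col] is not None and not isinstance(row[col], typ):
                raise ContractViolation(
                    f"row {i}: {col!r} is {type(row[col]).__name__}, expected {typ.__name__}")

    # Freshness: the dataset must have been updated recently enough.
    age = now - updated_at
    if age > contract.max_staleness:
        raise ContractViolation(f"data is {age} old, limit {contract.max_staleness}")

    # Completeness: fraction of nulls per column.
    for col in contract.schema:
        nulls = sum(1 for r in rows if r[col] is None)
        if nulls / len(rows) > contract.max_null_fraction:
            raise ContractViolation(f"{col!r}: {nulls}/{len(rows)} values are null")

    # Statistical properties: column means must stay inside agreed bounds.
    for col, (lo, hi) in contract.mean_bounds.items():
        vals = [r.get(col) for r in rows if r.get(col) is not None]
        if not vals:
            raise ContractViolation(f"{col!r}: no non-null values to check")
        mean = sum(vals) / len(vals)
        if not lo <= mean <= hi:
            raise ContractViolation(f"{col!r}: mean {mean:.3f} outside [{lo}, {hi}]")
```

Raising an exception (rather than logging a warning) is the design choice that makes the pipeline fail fast: an orchestrator treats the step as failed and downstream training never starts.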

The Infrastructure Imperative

Gartner's research consistently finds that legacy data infrastructure is the primary constraint on AI ROI: companies on legacy stacks spend materially more on AI projects while achieving lower success rates. Gartner also predicts that 70% of organizations will adopt modern data quality solutions by 2027 specifically to support AI adoption and digital business initiatives. The solution is foundational: governed, accessible, high-quality data that ML teams can discover and use without waiting weeks for access provisioning.

Building the Stack

· Adopt a feature store before models proliferate; retrofitting one afterward is extremely painful

· Monitor for data drift and feature distribution shifts, not just model performance metrics

· Treat embeddings as versioned data assets requiring lineage and staleness tracking

· Formalize data contracts between upstream producers and ML consumers before training begins

· Instrument training pipelines to log data statistics alongside model metrics
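For the monitoring recommendation, one widely used distribution-shift score is the Population Stability Index (PSI), which compares a feature's binned distribution in a reference window (for example, training data) against a current window. The sketch below is a minimal pure-Python version, assuming quantile bins taken from the reference sample and a small epsilon for empty bins. The function names are hypothetical, and the 0.1 / 0.25 thresholds are a commonly cited rule of thumb rather than a standard.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def quantile_edges(reference: Sequence[float], bins: int) -> List[float]:
    """Interior bin edges taken from reference quantiles (nearest-rank)."""
    s = sorted(reference)
    n = len(s)
    return [s[min(n - 1, (i * n) // bins)] for i in range(1, bins)]


def bin_fractions(values: Sequence[float], edges: List[float],
                  eps: float = 1e-6) -> List[float]:
    """Fraction of values per bin; eps avoids log(0) for empty bins."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    total = len(values)
    return [max(c / total, eps) for c in counts]


def psi(reference: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    """PSI = sum over bins of (cur - ref) * ln(cur / ref)."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    edges = quantile_edges(reference, bins)
    ref = bin_fractions(reference, edges)
    cur = bin_fractions(current, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def drift_status(score: float) -> str:
    # Rule-of-thumb thresholds; tune per feature and business risk.
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate shift"
    return "significant shift"
```

Running `psi` per feature on each scoring window, and logging the scores next to model metrics, covers both the drift-monitoring and the data-statistics bullets: a shift can surface in the inputs before it shows up as degraded accuracy.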